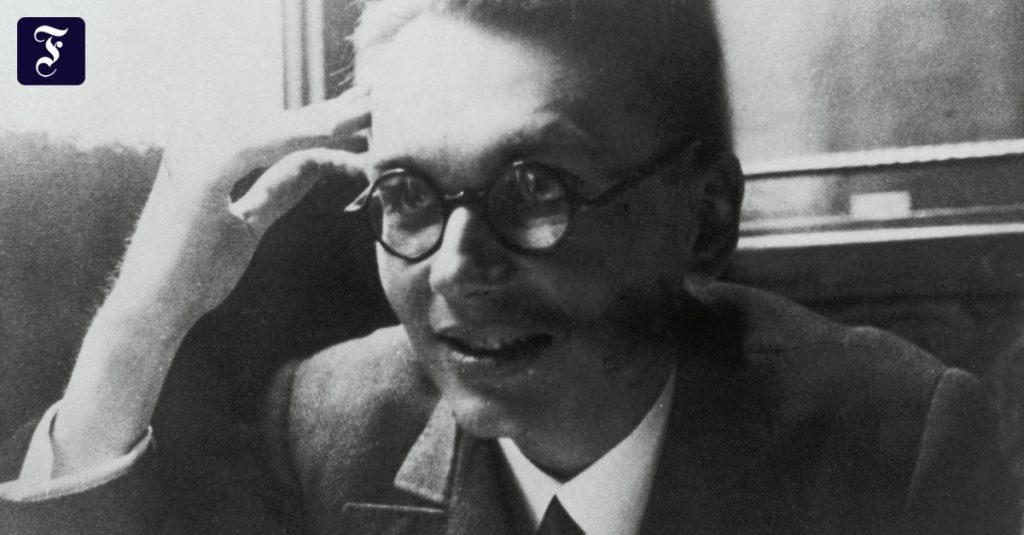

This year we celebrate the 90th anniversary of the pioneering work of Kurt Gödel, who founded modern theoretical computer science and the theory of artificial intelligence (AI) in 1931. Gödel sent shock waves through the academic community when he demonstrated the fundamental limits of computing, artificial intelligence, logic, and mathematics itself. This had a major impact on twentieth-century science and philosophy.

Born in Brünn (present-day Brno), Gödel was 25 years old when he wrote his thesis in Vienna. In the course of his studies, he designed a universal language for encoding any operations that can be formalized. It is based on the integers and can represent both data, such as the axioms of basic arithmetic and provable theorems, and programs, e.g. sequences of operations on the data that produce proofs. In particular, it allows the behavior of any digital computer to be formalized in an intuitive form. Gödel constructed his famous formal, self-referential, undecidable statements, which imply that their truth cannot be determined by calculation.
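The idea of representing both data and sequences of operations as integers can be illustrated with a small sketch. It uses the textbook prime-power encoding commonly associated with Gödel numbering; the function names `primes`, `encode`, and `decode` are hypothetical helpers chosen for this illustration, and the sketch does not reproduce the exact scheme of the 1931 paper.

```python
def primes():
    """Yield 2, 3, 5, ... by trial division (adequate for short sequences)."""
    found = []
    n = 2
    while True:
        if all(n % p for p in found):
            found.append(n)
            yield n
        n += 1


def encode(symbols):
    """Encode a sequence of non-negative integers as a single integer.

    The i-th symbol s becomes the exponent s + 1 of the i-th prime,
    so that a symbol with value 0 still leaves a trace in the product.
    """
    number = 1
    for p, s in zip(primes(), symbols):
        number *= p ** (s + 1)
    return number


def decode(number):
    """Recover the sequence from an integer produced by encode()."""
    symbols = []
    for p in primes():
        if number == 1:
            break
        exponent = 0
        while number % p == 0:
            number //= p
            exponent += 1
        symbols.append(exponent - 1)
    return symbols


code = encode([1, 0, 2])   # 2**2 * 3**1 * 5**3
print(code)                # 1500
print(decode(code))        # [1, 0, 2]
```

Because factorization into primes is unique, every sequence maps to exactly one integer and back. Whether the sequence is read as data or as a list of instructions is a matter of interpretation, which is what makes self-referential statements expressible.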

In doing so, he identified the fundamental limits of algorithmic theorem proving, of computation, and of every kind of computation-based artificial intelligence. Some even misunderstood his results and believed that they demonstrated the superiority of humans over artificial intelligence. In fact, much of the early AI of the 1940s to 1970s was concerned with theorem proving and deduction in the style of Gödel (as opposed to the inductive approach of machine learning that is prevalent today). To some extent, it was possible to support human specialists with deductive expert systems.

Frege, Leibniz, Turing

Like almost all great scholars, Gödel stood on the shoulders of others. He combined Georg Cantor's famous diagonalization trick from 1891 (which showed that there are different types of infinity) with fundamental insights from Gottlob Frege, who introduced the first formal language in 1879, from Thoralf Skolem, who introduced primitive recursive functions in 1923 (these are basic building blocks of arithmetic), and from Jacques Herbrand, who recognized the limitations of Skolem's approach. This work, in turn, extended pioneering ideas that the polymath and "first computer scientist" Gottfried Wilhelm Leibniz had had much earlier.

Leibniz's "Calculemus!" is a defining quote of the Enlightenment: "If there is a disagreement among philosophers, they will no more need to argue than accountants do. All they have to do is sit down with their pencils and slates and say to each other […]: Let us calculate!" In 1931, Gödel showed that there are fundamental limits to what can be decided by calculation.

In 1935, Alonzo Church derived a corollary of Gödel's result: the decision problem (Entscheidungsproblem) has no general solution. To do this, he used his alternative universal language, the untyped lambda calculus, which forms the basis of the influential programming language LISP. In 1936, Alan Turing in turn introduced another universal model, the Turing machine, and used it to derive the same result again. In the same year, Emil Post published yet another independent universal model of computing. Today we know many such models. Reportedly, however, it was Turing's work that convinced Gödel of the universality of his own approach.


Many of Gödel's instruction sequences were series of multiplications of integer-encoded storage contents by integers. Gödel did not care that the computational complexity of such multiplications tends to increase with storage size. Similarly, Church ignored the context-dependent space and time complexity of the basic instructions of his algorithms: the computational effort required to perform a given instruction is a priori unbounded.
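A rough sketch can make the point about growing cost concrete. It assumes a deliberately simple base-256 packing of storage cells into one integer (not Gödel's own encoding) and uses the hypothetical helpers `storage_as_integer` and `operand_bits`. The bit length of the encoded storage grows with the number of cells, so a single multiplication on it cannot be treated as a constant-cost step.

```python
def storage_as_integer(cells):
    """Pack a list of byte-sized cells (0..255) into one integer, base 256."""
    number = 0
    for cell in reversed(cells):
        number = number * 256 + cell
    return number


def operand_bits(cells):
    """Number of bits a multiplication must process for this storage."""
    return storage_as_integer(cells).bit_length()


small = operand_bits([1] * 10)
large = operand_bits([1] * 1000)
print(small, large)  # the second value is roughly 100 times the first
```

Any arithmetic operation on the encoded storage has to read at least this many bits, which is why an abstract "single instruction" in such a model hides an effort that grows with storage size.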

